-Mark Haeussler, CEO

Leaders – anyone – have the potential for moments of extraordinary brilliance, and moments of astonishing stupidity. We like to be seen as smart, quick learners, self-reflective, and humble. Most of us cringe when we make mistakes (at least internally), so much so that our brains often cover them up.

Yes, humans are quite capable of errors, poor reasoning, jumping to conclusions, and so on. Even people who are brilliant in one area can overlook flawed thinking in another. You see, the human mind can, and does, make mistakes, and then tricks itself into believing that it is correct. If you do not think that statement applies to you, you are already caught in a cognitive bias. Psychologists have been studying human logic traps, cognitive biases, and fallacies for a long time, and have found that we routinely make these mistakes. Of course, not you, but people you know (thinking that way is itself an example of cognitive bias). Researchers have identified somewhere between 175 and 200 cognitive biases and traps. Yikes!

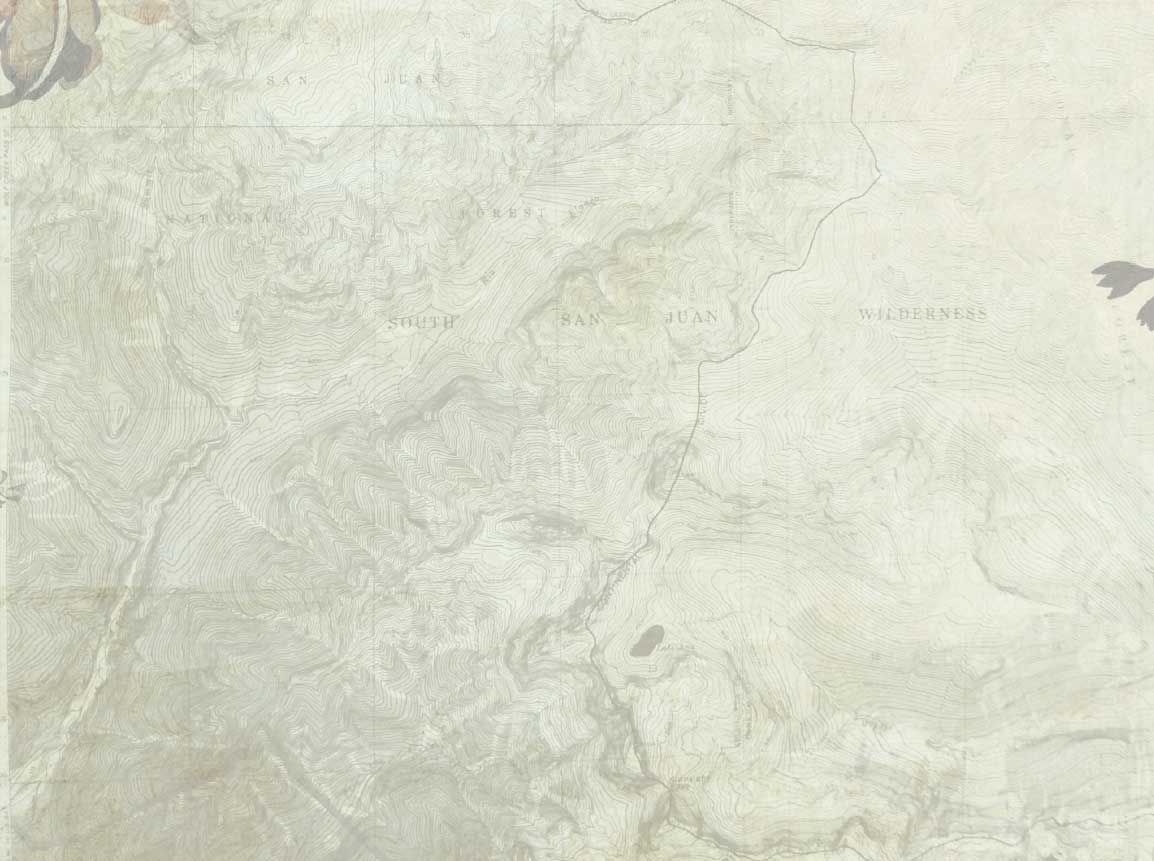

One common and unwelcome bias is the Dunning-Kruger Effect, named for the social scientists David Dunning and Justin Kruger and nicknamed "Mount Stupid" after the shape of the chart that depicts it: the false sense of expertise we reach after a quick insight. The Mount Stupid moment ("I'm so great") occurs early, when our confidence is high but we barely know the subject. It's easy to reach Mount Stupid: we attend one presentation at a conference, read one book, or have a ten-minute chat with someone who says things that we like to hear. "A little knowledge is a dangerous thing," a teacher once told me. Social scientists agree with her.

Dunning-Kruger goes something like this: (1) I gain a new insight, quickly believe I understand it, and hastily apply it. (2) I realize that my understanding was shallow and cursory, and that becoming an expert is going to take time, resources, and commitment. (3) I commit to deep understanding, seek feedback, and engage in regular practice to become a true expert. Unfortunately, many people never progress to step 2. Martin Luther King Jr. summarized step 1 this way: "Nothing in all the world is more dangerous than sincere ignorance and conscientious stupidity."

The antidote? Building diverse teams, creating an environment that welcomes courageous conversations, reading books contrary to our current thinking, attending conferences and classes outside our area of comfort and confidence, refusing to judge an idea as either brilliant or stupid the first time we hear it, and seeing our own filters more clearly. Learning more about biases, engaging in executive coaching, working with critical-thinking partners, and building an environment that welcomes and invites direct feedback are essential. Expertise in outsmarting our biases takes humility, feedback, and practice.